Why IREN Is Buying 50,000 GPUs Without a New Contract

Inside the strategy behind IREN’s massive GPU expansion.

The market reaction to IREN’s latest announcement seems to focus almost entirely on the size of the $6B at-the-market (ATM) equity program, with many investors immediately calling it “astronomical dilution.” Honestly, that part did not shock me nearly as much as the discussion suggests. Large infrastructure platforms rarely finance expansion in a single step. Equity programs like this are typically drawn down gradually, often in step with hardware deliveries and infrastructure build-out.

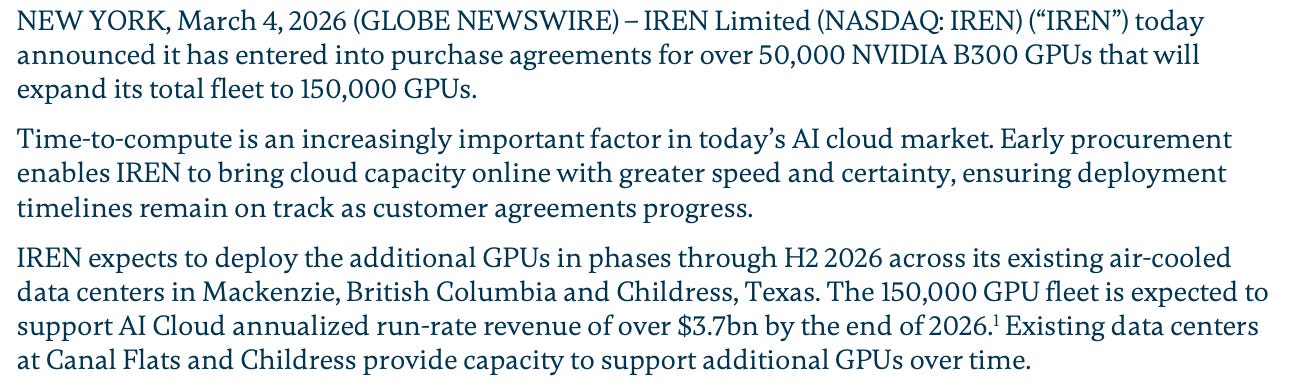

In this case, IREN also structured GPU purchases on a post-shipment payment basis, meaning the company does not need to fund the entire hardware order upfront. From a financial standpoint that matters a lot. In the current AI infrastructure market, GPUs, networking equipment and even certain memory components remain constrained. In that kind of environment, securing supply early can be more important than waiting for perfect financing conditions.

Another argument circulating online is that IREN is buying “outdated GPUs.” That claim seems exaggerated. The company is ordering Blackwell Ultra (B300) systems, which are expected to remain one of the main AI training platforms through at least the 2026–2027 cycle. Even hyperscalers are deploying large Blackwell clusters today. Rubin will represent the next architectural step, but it will require different infrastructure, significantly higher rack power density and a new generation of systems. In other words, the current deployment looks much more like a timing decision than a technological mistake.

That said, one of the more nuanced criticisms deserves attention. A significant portion of the new GPU fleet appears to be deployed in existing air-cooled data centers, including facilities in British Columbia and parts of Texas. Air cooling has clear advantages from a capital perspective. Building liquid-cooled hyperscale facilities is extremely expensive, and estimates suggest that using air-cooled infrastructure can save roughly $5–7 million per megawatt of infrastructure cost. When companies scale hundreds of megawatts of compute capacity, those savings become meaningful.
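To put the per-megawatt figure in perspective, here is a rough sketch that scales the quoted $5–7 million range across a few capacity levels. The capacities of 100, 200 and 300 MW are hypothetical values chosen only for illustration, not figures disclosed by the company, and the function name is my own.

```python
# Savings range per megawatt quoted above (USD millions).
SAVINGS_PER_MW_LOW = 5.0
SAVINGS_PER_MW_HIGH = 7.0


def air_cooling_savings(megawatts: float) -> tuple[float, float]:
    """Return (low, high) infrastructure savings in USD millions."""
    return megawatts * SAVINGS_PER_MW_LOW, megawatts * SAVINGS_PER_MW_HIGH


# Hypothetical capacities, for illustration only.
for mw in (100, 200, 300):
    low, high = air_cooling_savings(mw)
    print(f"{mw} MW: ${low:,.0f}M to ${high:,.0f}M saved")
```

Even at the low end of the range, a few hundred megawatts would imply savings on the order of a billion dollars or more, which is why the capital argument for air cooling carries weight.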

The trade-off is rack density. Air-cooled racks support significantly less power per rack than modern liquid-cooled AI clusters. That means fewer GPUs can be installed per rack, and over time this may result in less efficient infrastructure compared with extremely dense liquid-cooled deployments. If the industry shifts toward very high-density clusters for next-generation training workloads, some air-cooled sites could become less optimal. In other words, this strategy prioritizes speed and capital efficiency today, but potentially sacrifices some long-term density advantages.

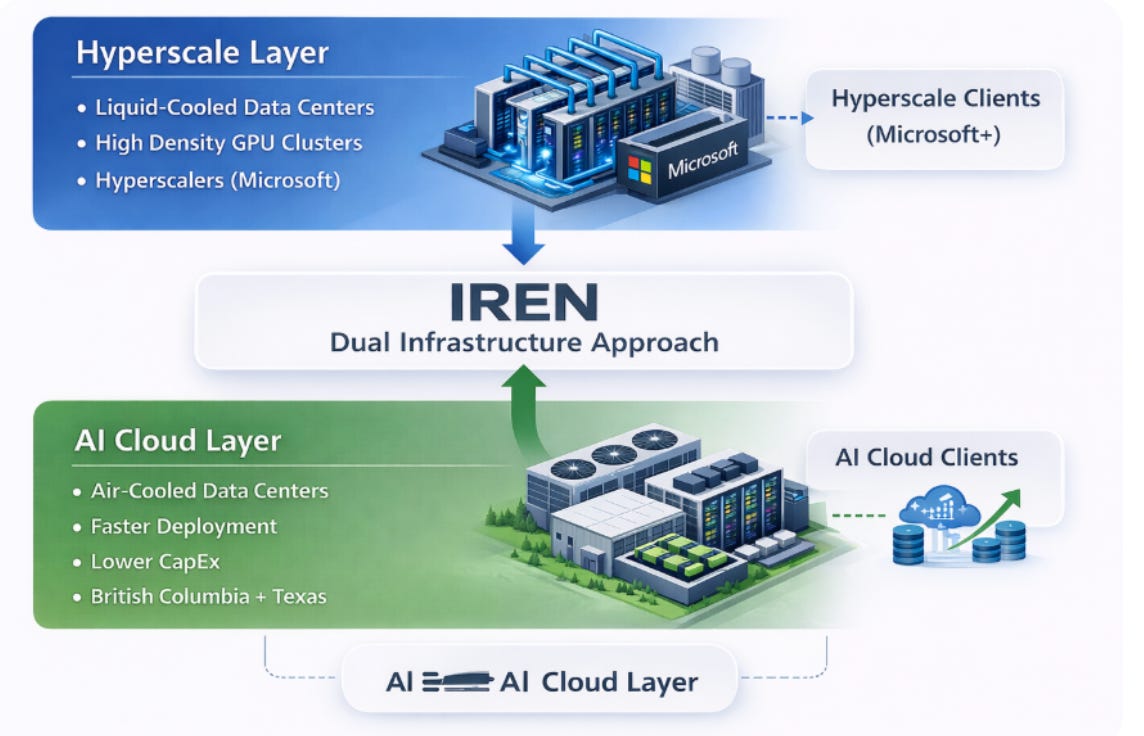

The Microsoft contract provides another important reference point. IREN signed a $9.7 billion multi-year AI cloud agreement expected to generate approximately $1.9 billion in annual run-rate revenue once fully deployed. The agreement also includes a 20% prepayment, which effectively provides meaningful upfront capital to support the infrastructure build-out. At the project level, the economics appear extremely strong, reflecting the pricing power that can exist when AI compute supply is constrained.
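A quick back-of-the-envelope check of those contract figures is sketched below. It assumes the 20% prepayment applies to the full $9.7 billion contract value and that revenue runs flat at the stated annual rate. Both are simplifications of mine, and the implied duration is not a disclosed contract term.

```python
# Figures quoted above (USD billions).
CONTRACT_VALUE_B = 9.7
ANNUAL_RUN_RATE_B = 1.9
PREPAYMENT_SHARE = 0.20

# Assumption: the prepayment is calculated on the total contract value.
prepayment_b = CONTRACT_VALUE_B * PREPAYMENT_SHARE

# Assumption: revenue is flat at the run rate, so this is only a rough
# implied duration, not the actual contract term.
implied_years = CONTRACT_VALUE_B / ANNUAL_RUN_RATE_B

print(f"Implied prepayment: ${prepayment_b:.2f}B")
print(f"Implied duration at full run rate: {implied_years:.1f} years")
```

Under these assumptions the prepayment comes to roughly $1.9 billion, about one year of run-rate revenue received upfront.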

However, it is important not to misinterpret those economics. Strong project-level margins do not automatically translate into extremely high margins for the entire company. Once GPU depreciation, infrastructure costs, financing expenses and corporate overhead are included, company-level margins will inevitably look much lower.

This is where comparisons with other AI infrastructure companies become useful. CoreWeave CEO Michael Intrator has indicated that once the company's data centers stabilize and reach full utilization, platform margins could settle somewhere in the mid-20% range. That type of margin profile is actually typical for capital-intensive infrastructure businesses. It also illustrates how very strong unit economics at the GPU cluster level can translate into more conventional margins once the entire platform cost structure is considered. It is also worth keeping in mind that IREN is a vertically integrated company with lower costs.

Another interesting signal about demand came recently from Nebius (NBIS), whose management suggested that demand for AI compute is strong enough that providers can raise prices significantly and still receive full prepayment from customers. If that statement reflects broader market conditions rather than a single company’s experience, it reinforces the idea that the real bottleneck in the industry may not be software or models, but rather access to GPUs, power capacity and operational infrastructure.

One aspect of the IREN story that deserves more attention is the company’s infrastructure architecture. The Microsoft deployment is clearly described as being built in liquid-cooled data centers at the Childress campus, delivering approximately 200 MW of IT load across Horizon 1–4. This infrastructure is designed specifically for hyperscale deployments and supports the extremely high rack densities required for large AI training clusters.

By contrast, the newly announced GPU expansion appears to target existing air-cooled facilities in British Columbia and parts of Texas. These deployments are architecturally different from the hyperscale Microsoft cluster.

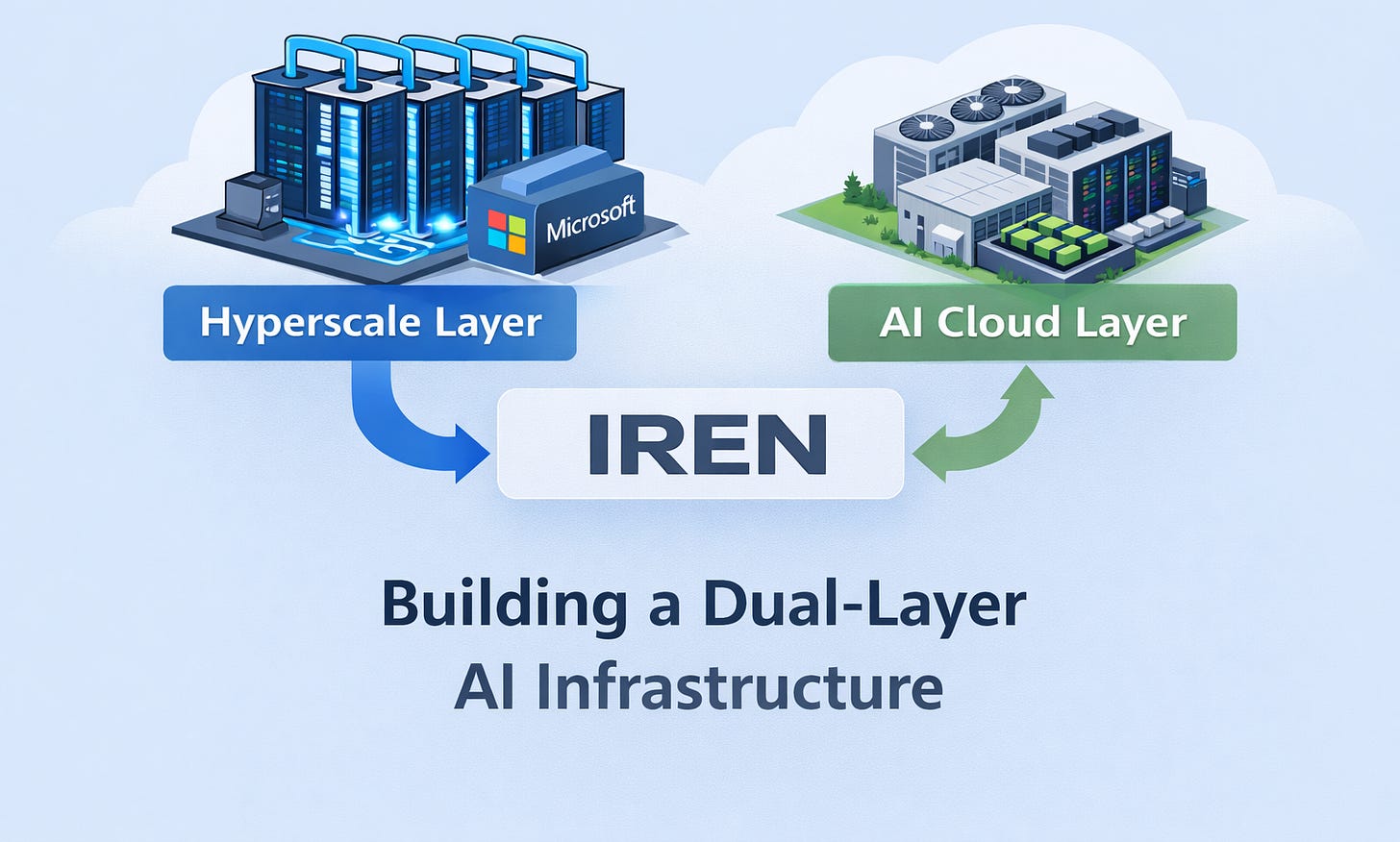

Taken together, this suggests that IREN may be building two distinct layers of infrastructure.

The first layer is hyperscale infrastructure, built around liquid-cooled data centers designed for very large long-term contracts with hyperscale partners.

The second layer appears to be IREN’s own AI cloud capacity, which can be deployed more quickly using existing air-cooled data centers. This infrastructure allows the company to scale compute capacity faster and at lower capital cost while serving a broader range of AI workloads and customers.

In practice this creates a two-tier model.

Liquid-cooled clusters support hyperscale training deployments and large strategic contracts, while air-cooled capacity allows the company to expand its own AI cloud platform more quickly.

From a valuation perspective, the company recently increased its long-term AI Cloud ARR outlook by roughly $300 million. How much market value that additional revenue translates into depends heavily on how investors classify the business.

If the company is valued primarily as infrastructure similar to traditional data center operators, multiples could fall in the 6–8× ARR range, implying roughly $1.8–$2.4 billion of incremental value. If investors begin to view the platform more like a high-growth AI infrastructure provider, multiples could reach 12–15× ARR, implying perhaps $3.6–$4.5 billion of additional valuation.
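The arithmetic behind those ranges is simple enough to sketch. The scenario labels and the function name below are my own; the ARR increase and the multiples are the ones quoted above.

```python
# Incremental AI Cloud ARR quoted above (USD billions).
INCREMENTAL_ARR_B = 0.3

# Valuation multiples on ARR for the two scenarios discussed above.
SCENARIOS = {
    "traditional infrastructure": (6, 8),
    "high-growth AI infrastructure": (12, 15),
}


def implied_value(arr_b: float, multiples: tuple[int, int]) -> tuple[float, float]:
    """Return (low, high) implied incremental value in USD billions."""
    low_multiple, high_multiple = multiples
    return arr_b * low_multiple, arr_b * high_multiple


for name, multiples in SCENARIOS.items():
    low, high = implied_value(INCREMENTAL_ARR_B, multiples)
    print(f"{name}: ${low:.1f}B to ${high:.1f}B")
```

The spread between the two scenarios is roughly twofold, which shows how much of the outcome depends on how the market classifies the business rather than on the revenue itself.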

In that context, the additional revenue potential could theoretically offset a meaningful portion of the dilution over time.

There is also a longer-term technology risk that cannot be ignored. If the industry rapidly transitions to significantly more efficient GPU architectures with dramatically higher rack densities, some Blackwell-based infrastructure built today could become relatively less competitive. That does not necessarily make the current investment obsolete, but it is a factor investors will need to watch closely over the next several years.

Ultimately, IREN appears to be attempting something ambitious: transitioning from a smaller operator into one of the larger independent AI compute platforms. If the company successfully deploys the planned GPU fleet and demand for AI compute remains strong, the resulting platform could become a meaningful player outside the hyperscalers.

At the same time, the strategy clearly carries risks. Heavy capital requirements, dilution, infrastructure design trade-offs and the need to secure additional large customers will all shape the outcome.

Which brings us back to the question that still hangs over the entire story: why buy 50,000 GPUs without a new contract?

If demand for AI compute is as strong as many industry participants suggest, the next thing investors will want to see is additional large customer contracts that confirm the newly built capacity will be fully utilized.

Until those contracts appear, the debate around the company will likely continue.

This publication is for educational and informational purposes only and does not constitute financial, investment, or trading advice. Readers are solely responsible for their own investment decisions. The author may hold positions in the securities mentioned.